Creating a Spark DataFrame in Spark using R

as.DataFrame(data, numPartitions = n) – Creates a SparkDataFrame from a local R data frame or list

createDataFrame(data, numPartitions = n) – Same as as.DataFrame; creates a SparkDataFrame from a local R data frame or list

read.df("file:///filepath", "fileformat", header = "true", inferSchema = "true", na.strings = "NA") – Creates a SparkDataFrame from a data source

loadDF("file:///filepath", "fileformat", header = "true", inferSchema = "true", na.strings = "NA") – Creates a SparkDataFrame from a data source

numPartitions : Number of partitions the SparkDataFrame is split into. Pass it by name, since the second positional argument of createDataFrame is schema.

#Set up spark home

Sys.setenv(SPARK_HOME = "…./spark-2.4.0-bin-hadoop2.7")

.libPaths(c(file.path(Sys.getenv("SPARK_HOME"), "R", "lib"), .libPaths()))

#Load the library

library(SparkR)

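#Initialize the Spark Context

#To run Spark on a local node, give master = "local"
#(sparkR.init and sparkRSQL.init are deprecated since Spark 2.0; sparkR.session(master = "local") replaces both)

sc <- sparkR.init(master = "local")

#Start the SparkSQL Context

sqlContext <- sparkRSQL.init(sc)

#Creating spark dataframe from local dataframe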

data = read.csv("/……weight-height.csv")

df <- as.DataFrame(data)

df1 <- createDataFrame(data, numPartitions = 7)

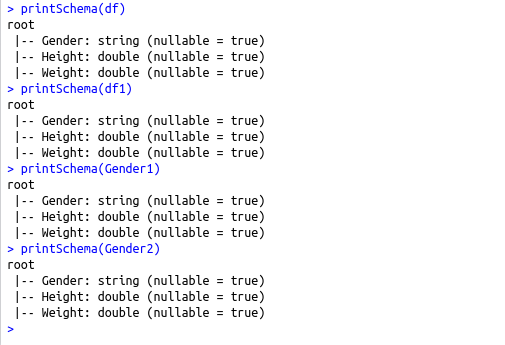

printSchema(df)

printSchema(df1)
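To see what numPartitions actually does, the partition count of a SparkDataFrame can be inspected with getNumPartitions. The following is a minimal sketch, assuming Spark is installed, SPARK_HOME is set, and the SparkR version provides getNumPartitions for SparkDataFrame (to my knowledge, 2.1.1 or later). It starts its own session with sparkR.session, and local_df is a hypothetical stand-in, not the weight-height file.

```r
library(SparkR)

# Start a local Spark session (assumes SPARK_HOME points at a Spark 2.x install)
sparkR.session(master = "local")

# Hypothetical local data frame used only for illustration
local_df <- data.frame(height = c(150, 160, 170, 180),
                       weight = c(50, 60, 70, 80))

# Ask for two partitions when converting the local data frame
sdf <- createDataFrame(local_df, numPartitions = 2)

# Number of rows and number of partitions of the resulting SparkDataFrame
n_rows  <- count(sdf)
n_parts <- getNumPartitions(sdf)
print(n_rows)
print(n_parts)

sparkR.session.stop()
```

The partition count is limited by the number of rows, so a very small data frame may end up with fewer partitions than requested.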

#Creating Spark data frame from data sources

Gender1 <- read.df("file:///…./weight-height.csv", "csv", header = "true", inferSchema = "true", na.strings = "NA")

Gender2 <- loadDF("file:///…./weight-height.csv", "csv", header = "true", inferSchema = "true", na.strings = "NA")

printSchema(Gender1)

printSchema(Gender2)
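For readers without the weight-height file at hand, the same read.df call can be tried on a small CSV written to a temporary location. This is only a sketch under stated assumptions: a Unix-like file system (so that prefixing the path with file:// gives a valid URI), a local Spark 2.x install with SPARK_HOME set, and made-up sample rows. The names csv_path, sample_sdf and local_copy are hypothetical.

```r
library(SparkR)

# Start a local Spark session (assumes SPARK_HOME points at a Spark 2.x install)
sparkR.session(master = "local")

# Made-up sample rows written to a temporary CSV file; the missing height is written as NA
csv_path <- tempfile(fileext = ".csv")
write.csv(data.frame(Gender = c("Male", "Female", "Male"),
                     Height = c(73.8, 58.9, NA)),
          csv_path, row.names = FALSE)

# Read the file back as a SparkDataFrame, treating the string "NA" as a null value
sample_sdf <- read.df(paste0("file://", csv_path), "csv",
                      header = "true", inferSchema = "true", na.strings = "NA")

printSchema(sample_sdf)

# Bring the result back into a local R data frame for inspection
n_rows     <- count(sample_sdf)
local_copy <- collect(sample_sdf)

sparkR.session.stop()
```

With inferSchema = "true", Spark scans the data to guess column types; without it, every CSV column is read as a string.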

#sparkR.session.stop()