To implement a pipeline architecture for classification in Spark with R using the sparklyr package

spark_connect(master = "local") – To create a Spark connection

sdf_copy_to(spark_connection, R object, name) – To copy data to the Spark environment

sdf_partition(spark_dataframe, partitions with weights, seed) – To partition a Spark DataFrame into multiple groups

ml_pipeline(spark_connection) – To create a Spark ML pipeline

ft_r_formula(formula) – To implement the transforms required for fitting a dataset against an R model formula

ml_logistic_regression() – To build a logistic regression model

ml_fit(pipeline_model,train_data) – To fit the model

ml_transform(fitted_pipeline, test_data) – To generate predictions for the test data

ml_binary_classification_evaluator(predictions, label_col, raw_prediction_col) – To evaluate the AUC (Area Under Curve) metric

#Load the sparklyr library

library(sparklyr)

library(caret)

#Create a spark connection

sc <- spark_connect(master = "local")

#Create the pipeline

pipeline <- ml_pipeline(sc) %>%

ft_r_formula(Gender~.) %>%

ml_logistic_regression()
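The partitioning step that follows expects a Spark DataFrame named data_s, but the step that creates it is not shown. A minimal sketch of how such an object could be produced with sdf_copy_to(), assuming a hypothetical local data frame df with a binary Gender column and two made-up numeric predictors (Height, Weight); the real dataset and its columns may differ:

```r
library(sparklyr)

# Hypothetical data: a binary Gender label plus two numeric predictors.
# The original dataset is not shown, so these columns are assumptions.
set.seed(111)
n <- 200
df <- data.frame(
  Gender = sample(c("Male", "Female"), n, replace = TRUE),
  Height = rnorm(n, mean = 170, sd = 10),
  Weight = rnorm(n, mean = 70, sd = 12),
  stringsAsFactors = FALSE
)

# Local Spark connection (requires a local Spark installation)
sc <- spark_connect(master = "local")

# Copy the R data frame into Spark; "data_s" is used as the Spark table name
data_s <- sdf_copy_to(sc, df, name = "data_s", overwrite = TRUE)

# Quick sanity check on the copied table
sdf_nrow(data_s)
```

With overwrite = TRUE the sketch can be re-run in the same session without a "table already exists" error.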

#Split the data for train and test

partitions=sdf_partition(data_s,training=0.8,test=0.2,seed=111)

train_data=partitions$training

test_data=partitions$test

#Fit the pipeline model

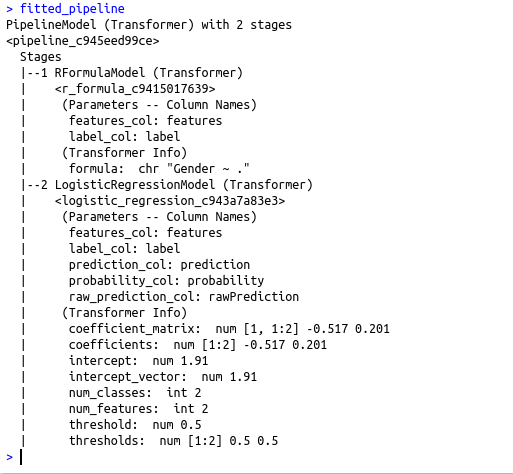

fitted_pipeline <- ml_fit(pipeline, train_data)

fitted_pipeline

#Predict using the test data

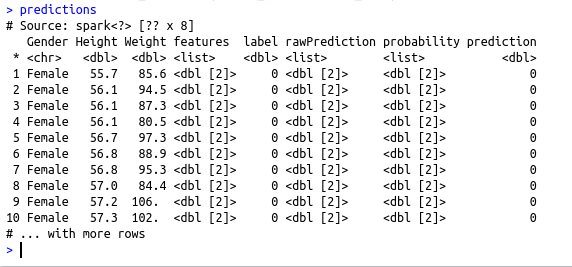

predictions <- ml_transform(fitted_pipeline, test_data)

predictions

#Evaluate the metrics AUC

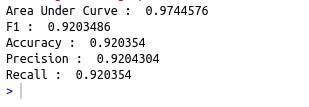

cat("Area Under Curve : ", ml_binary_classification_evaluator(predictions, label_col = "label", raw_prediction_col = "rawPrediction"))

cat("\nF1 : ", ml_multiclass_classification_evaluator(predictions, label_col = "label", prediction_col = "prediction"))

cat("\nAccuracy : ", ml_multiclass_classification_evaluator(predictions, label_col = "label", prediction_col = "prediction", metric_name = "accuracy"))

cat("\nPrecision : ", ml_multiclass_classification_evaluator(predictions, label_col = "label", prediction_col = "prediction", metric_name = "weightedPrecision"))

cat("\nRecall : ", ml_multiclass_classification_evaluator(predictions, label_col = "label", prediction_col = "prediction", metric_name = "weightedRecall"))
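To make the weighted metrics above less opaque, here is a small base R sketch of how accuracy, weighted precision, weighted recall and weighted F1 can be computed by hand. The label and prediction vectors are hypothetical stand-ins for the collected label and prediction columns, and the weighting by true-class frequency reflects my understanding of how Spark defines these metrics, not output taken from the run above:

```r
# Hypothetical 0/1 labels and predictions (not taken from the dataset above)
label      <- c(1, 1, 1, 1, 0, 0, 0, 0, 0, 0)
prediction <- c(1, 1, 0, 0, 0, 0, 0, 0, 0, 1)

classes <- sort(unique(label))

# Weight of each class = share of rows whose true label is that class
weights <- sapply(classes, function(k) mean(label == k))

# Per-class precision: correct predictions of k / all predictions of k
precision <- sapply(classes, function(k) {
  sum(prediction == k & label == k) / sum(prediction == k)
})

# Per-class recall: correct predictions of k / all true rows of k
recall <- sapply(classes, function(k) {
  sum(prediction == k & label == k) / sum(label == k)
})

# Per-class F1: harmonic mean of precision and recall
f1 <- 2 * precision * recall / (precision + recall)

accuracy           <- mean(prediction == label)
weighted_precision <- sum(weights * precision)
weighted_recall    <- sum(weights * recall)
weighted_f1        <- sum(weights * f1)

cat("Accuracy : ", accuracy,
    "\nPrecision : ", weighted_precision,
    "\nRecall : ", weighted_recall,
    "\nF1 : ", weighted_f1, "\n")
```

Note that with this weighting, weighted recall always equals accuracy, so the two values printed by the evaluators should match.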