Visual Language Navigation (VLN) is the practice of navigating a user interface through its graphical elements: the user recognizes and interacts with interface elements such as icons, menus, buttons, and sliders to move through the experience.

VLN acts as a channel of communication between the user and the system. It can reduce the complexity of the user experience and make it more intuitive. VLN is a significant aspect of user experience design because it gives users a visual representation of how to navigate a website or application.

VLN also creates a consistent and recognizable look and feel, which can help build brand recognition and trust. Additionally, it can improve accessibility, helping users with visual impairments better understand and interact with the site or application.

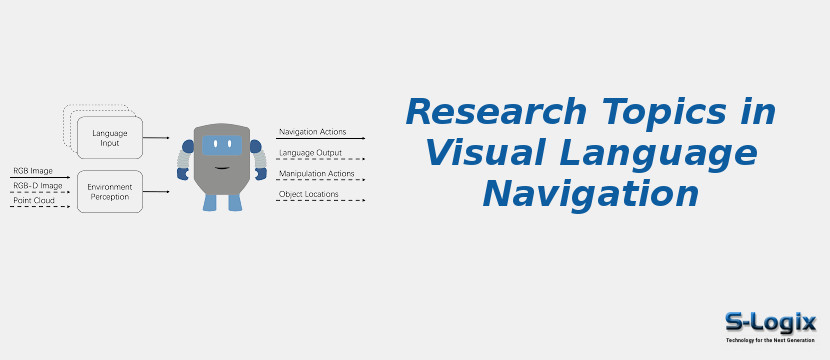

Perception: The VLN agent is equipped with visual sensors, such as cameras and depth sensors, that capture images or point clouds of its surroundings. These sensors give the agent information about its environment.

Natural Language Input: The human user gives the agent navigation instructions using natural language. Instructions can be communicated verbally, via text or by other means.

Language Understanding: The VLN system uses natural language processing (NLP) techniques to comprehend and analyze the given instructions. To extract the meaning and intent of the language input, parsing and semantic analysis are required.

Semantic Mapping: A high-level semantic map of the navigation task is created using the interpreted language instructions. The destination, landmarks, items, and spatial relationships indicated in the instructions may all be included on this map.

Environment Representation: A detailed representation of the surroundings is produced by processing the visual data the agent's sensors have collected. Depth estimation, scene segmentation, and object recognition can all be part of this representation.

Multimodal Fusion: VLN systems combine data from language and visual modalities. The semantic map from language understanding and the environmental representation from visual sensors are combined using multimodal fusion techniques.

Navigation Planning: The agent creates a path based on the fused multimodal information. This plan includes choosing how to navigate the surroundings and get to the intended location while following the given directions.

Execution of Actions: Following the created plan, the VLN agent carries out actions to move through the environment. Examples of actions are moving forward, turning, stopping, and interacting with objects or obstacles when needed.

Perception Update: Using its sensors, the agent continually updates its perception of its surroundings as it moves through the environment. This enables it to adjust to environmental changes, avoid obstacles, and improve its understanding of the navigation task.

Feedback and Interaction: Throughout the navigation process, the agent may communicate with the user, offering updates on its progress or asking for clarification when the instructions are unclear or it runs into unforeseen circumstances.

Goal Achievement: The main goal of the VLN agent is to adhere to the given instructions and arrive at the designated location. The agent can evaluate its progress toward the objective by analyzing input from the user and environment.

Evaluation and Performance: VLN systems are assessed according to their capacity to comprehend and obey instructions in natural language, navigate effectively and safely, and adjust to various situations and environments. Metrics and benchmark datasets are frequently employed in evaluation.
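As an illustration of the kind of metric used in evaluation, the sketch below computes two measures that are common in the navigation literature: success rate and Success weighted by Path Length (SPL), which discounts a successful episode by how much longer the agent's path was than the shortest one. The `Episode` record and its field names are hypothetical, chosen only for this example, and the code assumes every shortest path has a positive length.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    """Outcome of one navigation episode (hypothetical record format)."""
    success: bool          # did the agent stop close enough to the goal?
    shortest_path: float   # length of the shortest start-to-goal path (> 0)
    agent_path: float      # length of the path the agent actually took


def success_rate(episodes):
    """Fraction of episodes in which the agent reached the goal."""
    return sum(e.success for e in episodes) / len(episodes)


def spl(episodes):
    """Success weighted by Path Length, averaged over all episodes."""
    total = 0.0
    for e in episodes:
        if e.success:
            # A successful episode scores 1.0 only if the path was optimal.
            total += e.shortest_path / max(e.agent_path, e.shortest_path)
    return total / len(episodes)


episodes = [
    Episode(success=True, shortest_path=10.0, agent_path=10.0),  # optimal
    Episode(success=True, shortest_path=10.0, agent_path=20.0),  # long detour
    Episode(success=False, shortest_path=10.0, agent_path=5.0),  # never arrived
    Episode(success=True, shortest_path=8.0, agent_path=8.0),    # optimal
]
print(success_rate(episodes))  # 0.75
print(spl(episodes))           # 0.625
```

The gap between the two numbers shows why path efficiency is usually reported alongside raw success: the agent that took a detour still counts as a success but contributes only 0.5 to SPL.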

Broadly, the objective of VLN systems is to bridge the gap between natural language communication and autonomous navigation in virtual or real-world settings. This makes VLN a complex, interdisciplinary field, because it requires integrating techniques from computer vision, natural language processing, machine learning, and robotics.
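The steps above can be sketched as a single loop: interpret the instruction, perceive the environment, then act. The toy example below is only an illustration under strong assumptions. The grid world, the keyword-based parser, and all function names are hypothetical stand-ins: a real system would use learned language and vision models rather than string matching and a hand-written map.

```python
# Headings in clockwise order; each maps to a (row, col) step on the grid.
HEADINGS = ["north", "east", "south", "west"]
STEP = {"north": (-1, 0), "east": (0, 1), "south": (1, 0), "west": (0, -1)}

# Toy occupancy grid standing in for the environment representation:
# "." is free space, "#" is an obstacle.
GRID = [
    "....",
    ".#..",
    "....",
]


def parse_instruction(text):
    """Language understanding stand-in: keyword matching, not real NLP."""
    actions = []
    for clause in text.lower().split(","):
        if "left" in clause:
            actions.append("left")
        elif "right" in clause:
            actions.append("right")
        elif "forward" in clause:
            actions.append("forward")
        elif "stop" in clause:
            actions.append("stop")
    return actions


def perceive(grid, pos, heading):
    """Perception stand-in: report whether the cell directly ahead is free."""
    dr, dc = STEP[heading]
    r, c = pos[0] + dr, pos[1] + dc
    in_bounds = 0 <= r < len(grid) and 0 <= c < len(grid[0])
    return in_bounds and grid[r][c] == "."


def navigate(grid, start, heading, instruction):
    """Follow the parsed actions until 'stop' or until none remain."""
    pos, path = start, [start]
    for action in parse_instruction(instruction):
        if action == "stop":
            break
        if action == "left":
            heading = HEADINGS[(HEADINGS.index(heading) - 1) % 4]
        elif action == "right":
            heading = HEADINGS[(HEADINGS.index(heading) + 1) % 4]
        elif action == "forward" and perceive(grid, pos, heading):
            dr, dc = STEP[heading]
            pos = (pos[0] + dr, pos[1] + dc)
            path.append(pos)
        # A blocked "forward" is skipped; a real agent would replan instead.
    return pos, heading, path


final_pos, final_heading, path = navigate(
    GRID, start=(2, 0), heading="north",
    instruction="Go forward, go forward, turn right, go forward, stop",
)
print(final_pos, final_heading)  # (0, 1) east
```

Keeping parsing, perception, and action in separate functions mirrors the stages listed above, so any one of them could be replaced with a learned component without changing the loop.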

Convolutional Neural Networks (CNNs): CNNs are applied to analyze visual inputs and extract relevant features.

Recurrent Neural Networks (RNNs): RNNs are used to model sequences of language instructions and capture the relationships between words in a sentence.

Attention Mechanisms: Attention mechanisms let a model focus on the relevant parts of an image or a language sequence when making predictions.

Transformer Networks: Transformers are a class of neural networks that have proven effective in many NLP tasks and are also applied to VLN.

Reinforcement Learning: Reinforcement learning is applied to learn from the environment and optimize the agent's actions to reach a goal.

These techniques are often used in combination to form end-to-end models for VLN, in which an agent learns to navigate a virtual environment based on natural language instructions.
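To make the role of attention more concrete, the following minimal sketch scores several candidate views against an instruction vector using scaled dot-product attention and picks the best-matching direction. Everything here is assumed for illustration: the 4-dimensional embeddings are made-up numbers, whereas in a trained model a language encoder would produce the instruction vector and a CNN would produce the view features.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def attend(query, keys):
    """Scaled dot-product attention weights of one query over several keys."""
    scale = math.sqrt(len(query))
    return softmax([dot(query, k) / scale for k in keys])


# Hypothetical embeddings, hand-picked so the "left" view matches the query.
instruction_vec = [1.0, 0.0, 1.0, 0.0]
view_features = {
    "left":    [0.9, 0.1, 0.8, 0.0],
    "forward": [0.1, 0.9, 0.0, 0.2],
    "right":   [0.0, 0.2, 0.1, 0.9],
}

directions = list(view_features)
weights = attend(instruction_vec, [view_features[d] for d in directions])

# Choose the direction whose view receives the largest attention weight.
best = directions[max(range(len(weights)), key=weights.__getitem__)]
print(best)  # left
```

In an end-to-end model these weights would be learned rather than hand-picked, and reinforcement or imitation learning would adjust the encoders so that the highest-weighted view tends to lead toward the goal.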

Easy to Understand: VLN makes an interface simpler for users to understand.

User Friendly: VLN is often more user-friendly because it reduces the amount of text users need to read and comprehend.

Easier to Remember: Because VLN keeps navigation simple, users are more likely to remember how to get around a website.

Reduces Cognitive Load: VLN reduces the cognitive load on users by giving them a visual navigation map.

Improves Usability: By giving users a simpler way to navigate, VLN can improve the overall usability of a website.

Understanding visual context: AI systems must be able to identify objects and scenes in images and understand the relationships among them.

Ambiguity and variability: Images can be ambiguous or open to multiple interpretations, and visual scenes vary widely, making it difficult for AI systems to identify objects and recognize patterns accurately.

Data availability: High-quality annotated training data is critical for constructing effective visual language models, but gathering and labeling huge amounts of data can be time-intensive and resource-consuming.

Transfer learning: Pre-trained models can be fine-tuned for specific tasks, but transferring knowledge from one domain to another is still challenging.

Interpreting abstract concepts: Visual language navigation also involves understanding abstract concepts and relationships, including causality and time, which can be complex for AI systems to grasp.

Automated Customer Service: VLN can automate customer service interactions, letting customers find answers quickly and easily. Customer service teams can save time and cost by letting customers navigate through a visual interface.

Education: VLN can be used in educational settings to help students understand complex concepts. Using visuals to explain concepts can make the material simpler to understand and remember.

Web Design: VLN is applied to help users easily find and access content. By using visuals to guide users through a website, designers can help them find information quickly.

1. Implementation of Natural Language Interfaces: Natural language interfaces allow users to interact with a computer using natural language rather than a graphical user interface. Research can improve visual language navigation by incorporating natural language interfaces and developing algorithms that understand natural language commands better.

2. Development of Contextual Navigation: Research can be conducted to improve visual language navigation by examining the user's context and providing more contextual information to guide their navigation.

3. Advancement for Mobile Platforms: Researchers are exploring how visual language navigation can be improved with algorithms that take into account the user's physical context, including location and orientation.

4. Integration of Visual Language Navigation with Other Interfaces: Visual language navigation can also be combined with voice- or gesture-based interaction.