To understand how to tag words with their parts of speech, a step that word lemmatizing builds on, in natural language processing using the nltk library.

Import the libraries.

Define a sample text.

Split the sample text into sentences.

Tokenize each sentence into words.

Remove the stopwords.

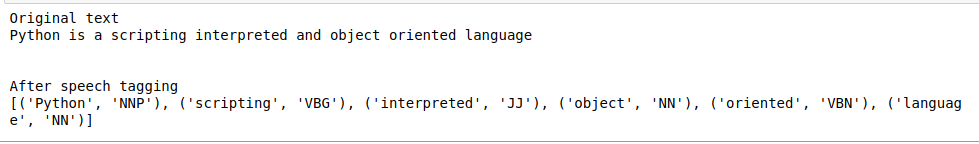

Tag each remaining word with its part of speech (part-of-speech tagging).

Print the results.

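```python
import nltk
from nltk.corpus import stopwords
from nltk.tokenize import word_tokenize, sent_tokenize

# One-time download of the tokenizer, stop-word and tagger data.
# Recent NLTK releases look up 'punkt_tab' and 'averaged_perceptron_tagger_eng',
# older ones 'punkt' and 'averaged_perceptron_tagger', so all of them are fetched.
for resource in ("punkt", "punkt_tab", "stopwords",
                 "averaged_perceptron_tagger", "averaged_perceptron_tagger_eng"):
    nltk.download(resource, quiet=True)

stop_words = set(stopwords.words('english'))

# Sample text
text = "Python is a scripting interpreted and object oriented language"

print("Original text")
print(text)


def speech_tagging(text):
    # Split the text into sentences
    tokenized = sent_tokenize(text)
    for sentence in tokenized:
        # Split each sentence into words
        words_list = word_tokenize(sentence)
        # Remove stop words (case-sensitive: the stop-word list is all lowercase)
        words_list = [w for w in words_list if w not in stop_words]
        # Tag each remaining word with its part of speech
        tagged = nltk.pos_tag(words_list)
        print("\n")
        print("After speech tagging")
        print(tagged)


speech_tagging(text)
```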