The algorithm for building decision trees is called C4.5. It builds classification in the form of a tree structure. It is implemented based on the MapReduce Framework. In the code given below, the first Mapreduce function finds the occurrences of attribute that is associated with class labels. After identifying the occurrences of attributes, the second Mapreduce function computes the Gain Ratio using information gain and split information of attributes. From the Gain Ratio, the reduce function selects the best attributes and then constructs the decision tree on third Map Reduce Function.

//First MapReduce

//count the attribute for specific class label

Map Phase input:<k1, v1>

k1-line no

v1-records

Extract attribute and class label from instance of the record

k2-attribute with class label

v2-1

output(k2, v2)

Reduce Phase input<k2, List<v2>>

//Counts number of occurrences of combination for attribute with class Label

k3-attribute with class Label

v3-frequency

output(k3, v3)

//Second MapReduce

//Find the best splitting attribute (decision node)

//Calculate Gain Ratio for each attribute

Map Phase input: <k3, v3>

Compute Entropy(k3)

Compute InformationGain(k3)

Compute SplitInfo(k3)

k4-attribute

v4-Entropy,Information and SplitInfomation

Output(k4,v4)

Reduce Phase input:<k4, List<v4>>

//Calculate Information Gain Ratio for each attribute

Highest Gain ratio is the best splitting attribute (decision node)

k5-decision node

v5–Information Gain Ratio

Output(k5,v5)

//Third MapReduce

//Tree construction

Map Phase input:<k5, v5>

//Reads the record of best attribute

//Compute the node id for highest attribute

//Every Node is represented by a list of attribute and its values.

K6-node id

V6-Elements (attribute values)

This process recursively call for creating non leaf branches, until all data is classified.

Output: <k6,v6>

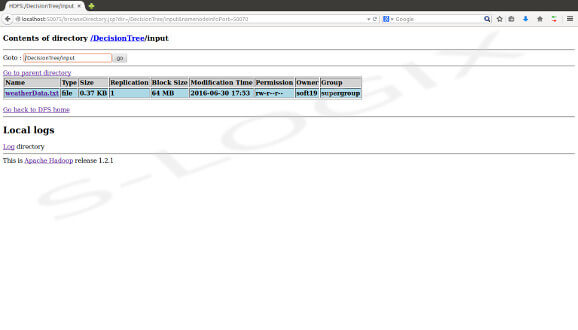

Input-Weather Data

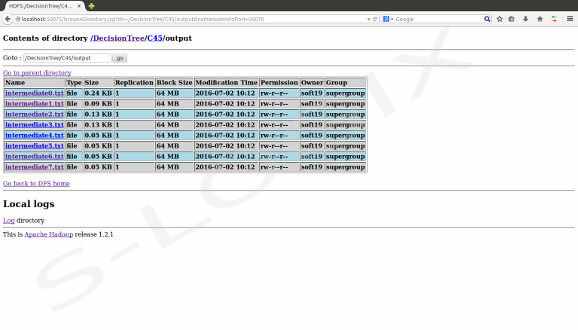

Output-Decision Tree